Who is Doing Policy Research on AI & Cybersecurity? A Look Inside the Think-Tank Landscape

Executive Summary

Barely a week passes without a policymaker, cybersecurity company or regulator citing artificial intelligence as the next big disruptor of cybersecurity – sometimes as a silver bullet, other times as an existential threat. Marketing white papers frequently promise predictive defences that out-maneuver ransomware gangs; keynote speeches warn of autonomous malware that learns on the fly. In policy circles, “AI and cyber” has become a set phrase, invoked as though a fully formed research field already exists.

Yet, when we looked more closely at who is producing sustained analysis, shaping terminology, and offering guidance to governments, the picture turned out to be surprisingly thin. A great deal of the public debate is running on anecdote and aspiration rather than cumulative evidence.

This report looks at how much research has been published on the intersection of artificial intelligence and cybersecurity by policy think tanks. It starts by measuring overall output, then identifies which organisations are publishing, what they are publishing, and what patterns emerge from this body of work. The goal is to understand how well the current policy literature reflects the growing policy interest in AI and cybersecurity.

We find that the total volume of substantive publications is small – a little over forty reports, briefs or articles across the past decade. Only twelve of the 111 leading national-security think tanks have published in this space, and much of the work comes from a small group of newer, more technical institutes, mostly in the United States. Larger, traditional think tanks have contributed little.

This suggests that the current research base is still emerging and largely focused on near-term developments. Most publications examine how AI enhances existing cyber operations – by improving targeting, scaling intrusion techniques, or automating tasks – rather than exploring fundamentally new forms of cyber threat. Social engineering attacks, such as phishing and the use of deepfakes, receive the most attention, alongside concerns about the security risks introduced by AI systems themselves. In-depth studies, particularly outside the United States, remain limited.

If governments want more robust policy advice, they will need to support deeper, technically grounded, and globally distributed analysis – not only of how AI is currently used, but also of how it may reshape the cybersecurity landscape over time.

Introduction

In February 2024 an accounts officer in the Hong Kong office of the British engineering giant Arup joined what looked like a routine Microsoft Teams call. On-screen were the firm’s chief financial officer in London and half-a-dozen colleagues from other regions, all discussing a confidential acquisition that - so the CFO explained - required a series of same-day transfers. The faces moved naturally, the voices carried familiar inflections, and the conversation flowed well enough to dissolve the officer’s initial caution. Over the next few hours she executed 15 wire payments, sending HK $200 million (≈ US $25 million) to five local bank accounts. Only after she followed up with head office did she learn that every other participant on the call had been an AI-generated deepfake - sound, video and even minor mannerisms conjured from open-source tools and publicly available footage.

The financial loss was serious, but the incident also highlighted something broader: the growing convergence of artificial intelligence (AI) and cybersecurity. Deepfakes, AI-assisted phishing, and automated reconnaissance are no longer speculative threats - they are part of the operating risk environment for organisations of all sizes. Governments have taken note. AI and cybersecurity now feature prominently in national strategies, policy speeches, and institutional reforms. Yet, it is less clear how this surge in high-level government interest has translated into detailed analysis or guidance from the think tanks that often inform public debate and government planning.

Laying the groundwork for unpacking the intersection of AI and cybersecurity, this report takes stock of how the policy research community has engaged with the topic. We conducted a systematic review of all accessible publications from the leading national security think tanks, science and technology institutes, and emerging AI-focused centres.2 Our aim was to understand who is publishing on the AI–cybersecurity nexus within the think tank space, what kinds of issues they are addressing, and where the gaps lie.

The findings are mixed. While a few think tanks specialising in emerging technology have produced serious, often insightful work, the overall volume of analysis remains low, and engagement by mainstream think tanks is limited. The result is a research landscape that is narrow, uneven, and increasingly shaped by a small group of agile, primarily US-based institutions. The outputs we examined tend to focus on high-level concerns - such as the offensive use of AI by cyber actors, or the vulnerability of AI systems - rather than detailed technical analysis or operational case studies. Few studies go beyond speculative scenarios to offer evidence-based assessments or actionable insights.

Several important areas are notably underexplored. There is little attention to how human operators interact with AI tools in high-stakes cybersecurity environments - for example, how trust, cognitive load, and decision-making are shaped by reliance on AI under pressure and uncertainty. Many publications also assume idealised conditions, with limited consideration of how cyber actors actually operate in real-world, adversarial environments.

Intrinsic vulnerabilities of AI systems, such as those stemming from flawed training data, model brittleness, or misaligned optimisation goals, are mentioned, but not systematically analysed. As a result, the current body of work tends to outline broad risks rather than offering structured guidance. Still, mapping these general viewpoints - and identifying areas of consensus or divergence - provides a useful starting point for understanding how the policy research community is beginning to engage with the AI-cybersecurity nexus.

Methods and Scope of Research

Our starting point was the 2020 Global Go To Think Tank Index compiled by the Think Tanks and Civil Societies Program (TTCSP) at the University of Pennsylvania’s Lauder Institute, the most recent edition available at the time of writing.3 From the index we drew the entire cohort of 111 organisations ranked under “Defence and National Security.” We treated these institutes as the core population against which to measure engagement with the AI–cybersecurity nexus, on the assumption that national-security think tanks are structurally positioned to shape - and to be consulted on - policy in this domain.

For each institute we reviewed every publicly accessible output - reports, policy briefs, blog posts, and conference papers - published between 1 January 2015 and 31 December 2024. Collection relied on a combination of site-restricted web searches, internal publication archives, academic databases, and RSS feeds, using paired keyword strings such as “artificial intelligence AND cybersecurity,” “machine learning AND cyber operations,” and “AI system vulnerability.” Whenever a document appeared in multiple formats (for example as a PDF and an HTML summary) it was counted once.

A publication was considered “substantive” when its central purpose was either to analyse how specific AI techniques transform offensive or defensive cyber practice, or to examine the cybersecurity weaknesses (or advantages) introduced by deploying AI systems themselves. To preserve that focus we set aside material that mentioned cybersecurity only in passing, treated AI as an abstract future risk without technical detail, or discussed AI-generated content (for instance deepfakes) purely in the context of political disinformation rather than network security. We likewise excluded broad surveys of national-security trends in which cyber issues occupied only a minor subsection. All screening decisions were made jointly by two researchers and any disagreement was resolved through discussion, ensuring a consistent application of the criteria across the dataset.

Because technological expertise is not restricted to defence institutes alone, we repeated the same search protocol for organisations listed in the TTCSP category “Top Science and Technology Policy Think Tanks.” Finally, in recognition of the rapid proliferation of AI-focused research centres that fall outside traditional rankings, we complemented the index-based sampling with a snowball search: citations, conference agendas, and professional-network referrals led us to additional institutes whose English-language publications met the same inclusion bar. This three-step approach - index-driven sampling, decade-long publication sweep, and carefully delimited inclusion criteria - provides a systematic, replicable view of which policy organisations have produced sustained analysis at the intersection of artificial intelligence and cybersecurity.

The full list of think tank publications can be downloaded here.

Who is publishing what

We find that only twelve of the 111 national security think tanks ranked by TTCSP have published substantive work focused on the intersection of artificial intelligence and cybersecurity over the past decade. This figure - roughly eleven percent - includes all formats, from commentary and policy briefs to long-form reports. Notably, six of these twelve also appear on TTCSP’s list of top science and technology policy institutes.

When the scope is widened to include organisations not listed by TTCSP, a notable pattern emerges. Seven additional institutes appear, five of which were founded after 2005. This means that nearly half of the institutions publishing on the AI–cyber nexus are either young or unranked, indicating that much of the current work in this space is being driven by newer, more agile think tanks.

Table 1: Number of AI-Cybersecurity publications per think tank

| Think tank | Country | Publications |
| --- | --- | --- |
| CSET Georgetown | United States | 15 |
| Atlantic Council | United States | 3 |
| Wilson Center | United States | 3 |
| RAND Corporation | United States | 3 |
| Alan Turing Institute | United Kingdom | 2 |
| Belfer Center for Science and International Affairs | United States | 2 |
| Council on Foreign Relations (CFR) | United States | 2 |
| Institute for Security and Technology | United States | 2 |
| Australian Strategic Policy Institute (ASPI) | Australia | 1 |
| Brookings Institution | United States | 1 |
| Carnegie Endowment for International Peace | United States | 1 |
| Centre for European Policy Studies (CEPS) | Belgium | 1 |
| Centre for International Governance Innovation | Canada | 1 |
| Future of Life Institute | United States | 1 |
| GLOBSEC Policy Institute (GPI) | Slovakia | 1 |
| Information Technology & Innovation Foundation | United States | 1 |
| Institute for Defense Analyses (IDA) | United States | 1 |
| Peace Research Institute Oslo (PRIO) | Norway | 1 |
| Royal United Services Institute (RUSI) | United Kingdom | 1 |

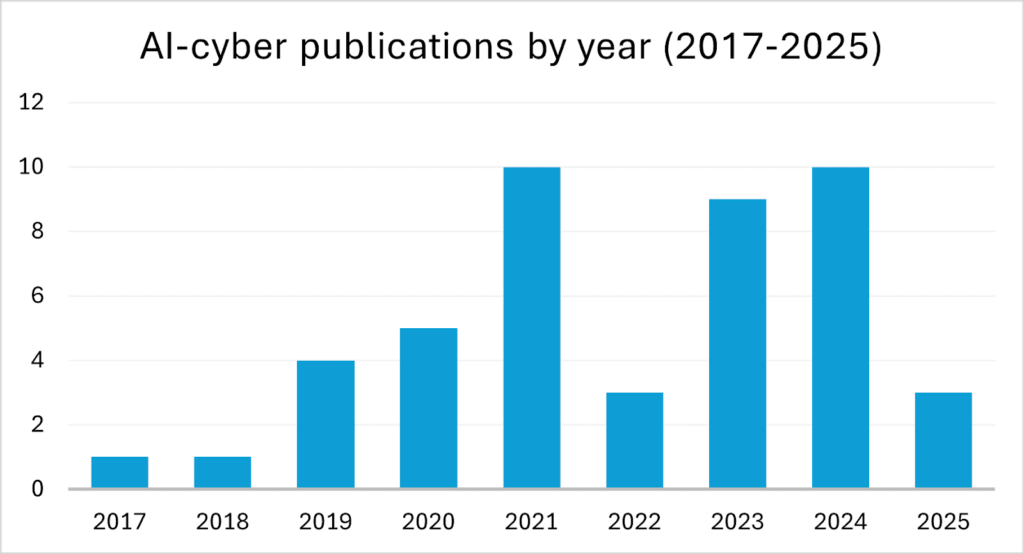

Globally, the output remains modest. Across all identified institutions - ranked and unranked - we find a little over forty substantive publications between 2017 and 2024. This is a surprisingly low number given the rhetorical prominence of “AI and cyber” in national strategies, speeches, and policy roadmaps over the same period.

The geographic concentration is notable. The majority of publications originate in the United States, with only a handful produced in the United Kingdom and a scattering from other countries (the majority of think tanks in the TTCSP list are also based in the United States). Authorship, too, is concentrated: one organisation - Georgetown’s Center for Security and Emerging Technology - accounts for over a third of all outputs. Institutes that bridge both the security and technology policy domains generate a disproportionately high share of the literature, despite representing only a minority of the total institutions reviewed.
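The shares quoted in this section can be rechecked directly from Table 1. The short Python sketch below does only that arithmetic; the counts are transcribed from the table, and the variable names are ours rather than part of the original dataset.

```python
# Publication counts transcribed from Table 1: (think tank, country, count).
TABLE_1 = [
    ("CSET Georgetown", "United States", 15),
    ("Atlantic Council", "United States", 3),
    ("Wilson Center", "United States", 3),
    ("RAND Corporation", "United States", 3),
    ("Alan Turing Institute", "United Kingdom", 2),
    ("Belfer Center", "United States", 2),
    ("Council on Foreign Relations", "United States", 2),
    ("Institute for Security and Technology", "United States", 2),
    ("ASPI", "Australia", 1),
    ("Brookings Institution", "United States", 1),
    ("Carnegie Endowment", "United States", 1),
    ("CEPS", "Belgium", 1),
    ("CIGI", "Canada", 1),
    ("Future of Life Institute", "United States", 1),
    ("GLOBSEC Policy Institute", "Slovakia", 1),
    ("ITIF", "United States", 1),
    ("Institute for Defense Analyses", "United States", 1),
    ("PRIO", "Norway", 1),
    ("RUSI", "United Kingdom", 1),
]

# Total output and output per country.
total = sum(count for _, _, count in TABLE_1)
by_country = {}
for _, country, count in TABLE_1:
    by_country[country] = by_country.get(country, 0) + count

cset_share = 15 / total                      # CSET's share of all outputs
us_share = by_country["United States"] / total
ranked_share = 12 / 111                      # ranked think tanks that published

print(f"total publications: {total}")
print(f"CSET share: {cset_share:.1%}")
print(f"US share: {us_share:.1%}")
print(f"ranked think tanks publishing: {ranked_share:.1%}")
```

On these counts the table sums to 43 publications, of which 35 come from US-based institutes and 15 from CSET alone.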

Publication rates rise over time, with an uptick beginning in 2020, a brief lull in 2022, and renewed momentum in 2023–24. It is not immediately clear to what extent these waves of policy research activity align with major AI developments, such as the release of OpenAI’s GPT-3 in mid-2020, the launch of ChatGPT in late 2022, and the release of GPT-4 in March 2023: there appears to be some correlation, but it is not especially pronounced. The growth itself is led almost entirely by small, specialised institutes rather than established defence think tanks.4

What emerges is a policy research landscape that remains relatively narrow in scope, dominated by US-based institutions, and shaped largely by a small group of technically oriented, fast-moving organisations.5 Many established national security think tanks have not engaged with this space, likely because cybersecurity itself falls outside their traditional areas of focus and because they lack combined expertise in both fields. Whether they will seek to play a more active role in the AI–cybersecurity conversation remains uncertain. Without new partnerships, funding models, or internal shifts, they may find themselves increasingly peripheral as newer actors define the terms of debate.

While headlines often portray AI as either a silver bullet or a looming existential threat, the current reality is more nuanced. Three key discussion points emerge from our analysis, including shared observations, as well as areas of ongoing debate.

1. AI increases the efficiency of offensive cyber operations, but it doesn’t introduce entirely new types of threats.

Think tank outputs vary in how they interpret AI’s significance. Some adopt ambitious rhetoric, describing AI as a “revolutionising” force poised to fundamentally reshape the cyber threat landscape.6 However, the more prevalent narrative in these outputs is measured and critical: it emphasises incremental progress and cautions against overhyping AI’s capabilities.7 Across multiple expert analyses, the common insight is that AI is evolutionary rather than revolutionary.8

At present, AI does not make attackers "smarter"; it primarily automates routine, low-level operations and previously time-consuming actions. While threat actors may use AI to generate convincing and increasingly personalised phishing lures, or simulate legitimate communications, these actions are not novel but long-standing tactics now executed with greater efficiency.9 Here, we examine several areas of cyber operations that policy writing often identifies as being affected by AI.

First, it is frequently pointed out that threat actors are taking advantage of AI-driven reconnaissance, significantly speeding up cyber-attack planning. AI-driven real-time scanning and probing of networks allows threat actors to maintain constant surveillance and expose vulnerabilities in networks.10 Adversarial Machine Learning (AML), a type of machine learning (ML) which learns by trial and error, can also be used to find vulnerabilities in software. Fuzzing, a method of identifying software bugs by inputting random data, is becoming more efficient with the application of ML.11 A fuzzer, the tool used in this process, tests for flaws by flooding the target software with numerous inputs and analysing the resulting behavior. These inputs may be entirely random or specifically tailored to the characteristics of the software under examination. AML-powered fuzzers enhance vulnerability discovery by iteratively generating and refining inputs that cause software crashes, making the search for weaknesses more focused and effective. AI-enhanced fuzzing tools thereby speed up the discovery of zero-day vulnerabilities (previously unknown software flaws) and can help identify fuzz targets (functions that test specific pieces of code), leading to improved code coverage over time.12 Adding to this, Supervised Machine Learning (SML), a type of ML which learns from labeled examples (e.g., “spam” vs. “not spam”), can help attackers and defenders decide which vulnerabilities matter most and where to focus their efforts.13
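The feedback loop described above can be illustrated with a deliberately small Python sketch. It is not an ML-powered fuzzer: it uses plain random mutation and keeps any input that gets further into the target than before, which is the part of the loop that learned models are said to make more efficient. The target `toy_parser`, its planted bug, and all parameter values are hypothetical and exist only for this illustration.

```python
import random


def toy_parser(data: bytes) -> int:
    """Hypothetical target: returns how many header checks passed.

    Raises ValueError when the planted bug is reached.
    """
    depth = 0
    if len(data) > 0 and data[0] == ord("F"):
        depth = 1
        if len(data) > 1 and data[1] == ord("U"):
            depth = 2
            if len(data) > 2 and data[2] == ord("Z"):
                raise ValueError("planted bug reached")
    return depth


def mutate(data: bytes, rng: random.Random) -> bytes:
    """Overwrite one randomly chosen byte with a random value."""
    buf = bytearray(data)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)


def fuzz(max_iters: int = 200_000, seed: int = 0):
    """Return (crashing_input, iterations), or (None, max_iters) if none found."""
    rng = random.Random(seed)
    corpus = [b"\x00\x00\x00\x00"]  # initial seed input
    best_depth = 0
    for i in range(1, max_iters + 1):
        candidate = mutate(rng.choice(corpus), rng)
        try:
            depth = toy_parser(candidate)
        except ValueError:
            return candidate, i  # crash found
        if depth > best_depth:
            # Feedback step: keep the input that got deepest into the target.
            best_depth = depth
            corpus = [candidate]
    return None, max_iters


if __name__ == "__main__":
    crash, iters = fuzz()
    print(f"crashing input {crash!r} found after {iters} iterations")
```

Without the feedback step, hitting all three header bytes by chance would take on the order of 256³ attempts; keeping partial progress reduces this to a few thousand. An ML-guided fuzzer would, under this framing, replace `mutate` with a model that proposes inputs more likely to reach new code.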

Alongside identifying bugs in software, it is argued that threat actors can now tailor attacks with greater accuracy, as intelligent profiling (via social media and public data) and AI-driven, automated data collection allow for more targeted victim selection, especially for social engineering attacks like phishing. ML models can analyse and organise information - personal, professional, and technical information from public sources, like social media platforms, company websites, online forums, but also public breach data and dark web leaks - gathered by web crawlers and scrapers to build detailed profiles of individuals and organisations.14

Second, social engineering is said to be amplified by the capabilities of AI. This shift is largely driven by the growing prevalence of Generative Learning, most often referred to as Generative AI (GenAI), a subset of ML designed to create new content, which can take the form of text, images, audio or videos, based on patterns the models have learned from large datasets. Unlike traditional AI techniques, which mainly classify or analyse existing data, GenAI systems such as Large Language Models (LLMs) and image generators produce novel content by replicating those learned patterns.

GenAI has the ability to greatly improve how attackers deceive people, especially in the delivery phase of an attack, the stage where they try to get someone to open a malicious file, click a link, or install harmful software. Traditionally, social engineering relies on manipulating human psychology to deceive individuals into revealing sensitive information or performing harmful actions. With the advent of GenAI, threat actors can now automate and personalise these attacks on a much larger scale. Alongside its reconnaissance-enhancing capabilities, GenAI is already being widely used by threat actors to craft realistic phishing emails and synthetic media, including images, audio, or videos, the latter also known as deepfakes.15

Third, several think tanks note that enabling AI coding assistants to generate, review, and even translate code - for example, code that finds, encrypts, and deletes files - significantly streamlines the development of malicious software by cyber adversaries. By automating repetitive tasks, LLMs make writing malicious code more time-efficient, improve how code is understood (especially legacy code), and help spot bugs or security problems early on.16

A potential impact of AI on the complexity of malicious code lies in the capabilities of LLMs to assist in the creation of polymorphic malware. Unlike traditional malware, which can often be identified through signature-based methods, polymorphic malware changes its file names and internal structures, and even encrypts parts of itself with different keys on each execution, rendering conventional detection techniques ineffective. AI tools, particularly LLMs, can be used to generate countless malware variants that retain the same malicious functionality but differ enough in structure to bypass signature-based detection systems.17 For example, some malware can mutate itself using AI to stay undetectable by antivirus software. These mutations can be refined over time by AML models to stay effective.18 However, modern security tools use more than just code signatures, so this type of malware isn’t necessarily “undetectable.” There’s potential for GenAI to contribute to more autonomous, adaptive malware in the future. But for now, GenAI is just one tool that might enhance advanced cyber operations led by humans.19
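Why exact signatures are brittle, and why that does not make a file "undetectable", can be shown with two harmless byte strings. The Python sketch below uses a SHA-256 hash as a stand-in for a signature and a crude content check as a stand-in for the additional signals modern tools rely on; both detectors and both sample strings are simplified assumptions, not a description of any real product.

```python
import hashlib


def sha256_hex(blob: bytes) -> str:
    """Hex digest used here as a stand-in for an exact file signature."""
    return hashlib.sha256(blob).hexdigest()


# Two harmless samples with identical behaviour but different bytes.
original = b"print('hello')  # harmless sample A"
variant = b"print('hello')  # harmless sample B"

# Hypothetical signature database containing only the original sample.
signature_db = {sha256_hex(original)}


def signature_match(blob: bytes) -> bool:
    """Exact-hash lookup: any change to the bytes changes the hash."""
    return sha256_hex(blob) in signature_db


def behaviour_match(blob: bytes) -> bool:
    """Crude stand-in for a check on what the sample does, not how it looks."""
    return b"print('hello')" in blob


print(signature_match(original))  # True
print(signature_match(variant))   # False: one changed byte defeats the hash
print(behaviour_match(variant))   # True: the shared behaviour is still visible
```

The point of the second check is only that signals tied to functionality survive surface-level rewrites, which is why the reports caution against treating polymorphic code as invisible.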

Relatedly, various think tank reports observe that skilled attackers can use GenAI tools to help speed up the creation of malware code, including adding features to avoid detection. However, some note that inexperienced users are unlikely to benefit much, since they need to understand what to ask for and how malware works.20 When GenAI is used to make malware harder to detect, it is usually because a human expert specifically told it how to do so.

Fourth, attackers conducting cyber operations often face the challenge of maintaining covert communication with compromised systems to issue instructions, known as command and control (C2) traffic, without triggering detection mechanisms. To this end, adversaries can use ML to understand how networks behave and craft attacks that blend in to avoid being noticed.21 As Ben Buchanan notes, by analysing and replicating typical patterns in communication such as timing, structure, and content, GenAI enables adversaries to disguise malicious instructions as innocuous data exchanges, significantly reducing the likelihood of detection.22

Highlighted reading: AI in cyber offense

The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense‑Defense Balance23

Jennifer Tang, Tiffany Saade & Steve Kelly (Institute for Security and Technology)

October 2024

AI in cybersecurity increases speed, scale, and efficiency, as well as introduces new risks. Jennifer Tang, Tiffany Saade & Steve Kelly argue that defenders currently hold an advantage due to their control of internal systems and early adoption. Yet where defenders benefit from “home field” advantages like proprietary code access, deep network knowledge, and a strong ecosystem of AI-enhanced security services, attackers are also gaining significant AI capabilities. The authors report five key premises of AI in cybersecurity: (1) revolutionizing content analysis; (2) challenging user authentication and human-to-human interactions; (3) enhancing code writing, reviewing, and vulnerability detection; (4) enhancing cybersecurity operations; (5) increasing efficiency and effectiveness of adversarial reconnaissance and target identification. Among both offensive and defensive actors it is those that embrace AI early and invest in innovation that stay ahead in the cybersecurity “arms race.”

Hacking with AI: The Use of Generative AI in Malicious Cyber Activity24

Maia Hamin & Stewart Scott (Atlantic Council)

February 2023

Focusing on the impact of Large Language Models (LLMs) on cyber attacks, Hamin & Scott map LLM capabilities to the different phases of the cyberattack lifecycle, distinguishing between how such tools could help low-skill attackers scale activities like phishing or open-source intelligence and how, by comparison, they might aid sophisticated actors. The authors conclude that LLMs are best seen as augmentation tools that improve existing workflows and reduce the skill threshold required for launching attacks. The authors also stress that the potential for LLMs to independently discover zero-day exploits or execute multi-stage intrusions autonomously remains speculative and unsupported by current empirical evidence.

2. AI is a force multiplier to both cyber offense and defence

While AI may enhance the efficiency of offensive cyber operations, it is also seen as having significant defensive potential - and thus it is often described as a force multiplier for both offense and defense in the think tank literature.25 These outputs also note that this dual boost is driving an AI-led 'arms race', with each side racing to build systems that can out-predict and counter the other’s next move.26

It is said that AI can enhance cyber defense in a number of ways. First, modern networks generate massive volumes of data (logs, network activity, user behaviour) that are impossible to analyse manually, let alone in real time. AI addresses this challenge by filtering out irrelevant data, identifying anomalies, and highlighting suspicious patterns.27 ML-enabled systems are reported to be increasingly able to detect never-before-seen malware by recognising underlying patterns that signal malicious behaviour.28 Second, as noted in the previous section, by leveraging its ability to identify complex patterns across vast datasets, AI enhances automated vulnerability testing, reducing reliance on manual oversight.29 Third, AI may also speed up the rewriting of code written in older, less secure programming languages (like C or C++) in safer ones (like Rust).30
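The idea of filtering routine data and surfacing anomalies can be made concrete with a very simple statistical baseline. The Python sketch below is not machine learning in any strong sense: it flags values that sit far from the median using a robust z-score. The hourly login counts, the 3.5 threshold, and the function names are illustrative assumptions.

```python
from statistics import median


def robust_z_scores(values):
    """Score each value by its distance from the median, scaled by the MAD."""
    med = median(values)
    mad = median(abs(v - med) for v in values)  # median absolute deviation
    if mad == 0:
        # Simplification: with no spread at all, nothing is scored as unusual.
        return [0.0 for _ in values]
    # 0.6745 rescales the MAD so scores are comparable to standard z-scores.
    return [0.6745 * (v - med) / mad for v in values]


def flag_anomalies(values, threshold=3.5):
    """Return the indices of values whose robust z-score exceeds the threshold."""
    scores = robust_z_scores(values)
    return [i for i, z in enumerate(scores) if abs(z) > threshold]


# Hypothetical failed-login counts per hour; hour 6 contains a burst.
hourly_failed_logins = [12, 9, 11, 10, 13, 8, 240, 11, 10, 12]
print(flag_anomalies(hourly_failed_logins))  # [6]
```

Production systems use far richer features and models, but the division of labour is the same: the tool narrows thousands of events to a handful that an analyst then reviews.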

That said, a major difficulty is that AI models used in cyber defence must be regularly retrained or updated to remain effective. Joshua Steier et al., in a RAND report, highlight distributional shift: a mismatch between the data an AI system was trained on and the data it encounters in real-world environments. When such a shift occurs, model performance can degrade sharply, leading to missed detections or false positives, thereby undermining trust in automated defences.31 This lag in adaptation might create an asymmetric advantage for attackers. In one of various CSET outputs, Ben Buchanan et al. also highlight that organisations with few resources or little existing capacity in cyber defence will have a harder time keeping up with the increased, AI-driven sophistication of adversaries, whereas organisations with well-trained personnel and ample resources may be more successful in using AI-driven tools to gain an edge over attackers in light of evolving threats.32
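One common way to notice distributional shift is to compare the values a model saw in training with the values it sees in operation. The Python sketch below computes a two-sample Kolmogorov-Smirnov statistic by hand for a single feature; the sample values and the `RETRAIN_THRESHOLD` cut-off are hypothetical, and this is one possible monitoring approach rather than the method used in the RAND report.

```python
from bisect import bisect_right


def ks_statistic(reference, live):
    """Largest gap between the empirical CDFs of two samples (0.0 to 1.0)."""
    ref = sorted(reference)
    liv = sorted(live)
    gaps = []
    for x in ref + liv:
        ref_cdf = bisect_right(ref, x) / len(ref)  # share of ref values <= x
        liv_cdf = bisect_right(liv, x) / len(liv)  # share of live values <= x
        gaps.append(abs(ref_cdf - liv_cdf))
    return max(gaps)


# Hypothetical values of one model feature at training time and in production.
training_values = [0.1, 0.2, 0.2, 0.3, 0.3, 0.4, 0.4, 0.5]
live_same = list(training_values)
live_shifted = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 1.0]

RETRAIN_THRESHOLD = 0.3  # assumed cut-off; a real deployment would tune this

for name, live in [("unchanged", live_same), ("shifted", live_shifted)]:
    gap = ks_statistic(training_values, live)
    print(f"{name}: KS = {gap:.2f}, retrain = {gap > RETRAIN_THRESHOLD}")
```

A statistic near 0 suggests the live data still resembles the training data; a value near 1 means the two barely overlap and the model is operating outside what it learned.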

Highlighted reading: AI in cyber defence

Machine Learning and Cybersecurity: Hype and Reality33

Micah Musser & Ashton Garriott (Center for Security and Emerging Technology)

June 2021

Micah Musser & Ashton Garriott report that machine learning (ML) has the potential to enhance threat detection and automate various defensive cybersecurity tasks; however, most current applications are refinements or extensions of existing methods, such as tools for spam detection, intrusion detection, and malware detection, rather than revolutionary changes in cyber defence. Yet Musser and Garriott highlight that more recent innovations in ML architectures (such as generative adversarial networks, or GANs, and LLMs) appear to drive new ways of expanding cybersecurity abilities. While ML is unlikely to dramatically shift the overall offense-defense balance in cybersecurity, it may influence the strategies preferred by both attackers and defenders.

AI and the Future of Cyber Competition34

Wyatt Hoffman (Center for Security and Emerging Technology)

January 2021

In this report, Wyatt Hoffman argues that ML may intensify cyber competition rather than resolve it. Using ML in cyber defence introduces new vulnerabilities that adversaries can exploit, and flaws within ML systems cannot be patched like traditional software, making ML systems susceptible to deception and manipulation. Attackers may need early and deep access to target networks to reverse-engineer or sabotage ML systems during development, enabling them to craft attacks that deceive specific ML defenses. Defending against deceptive attacks requires continuous intelligence gathering on attackers’ capabilities, potentially necessitating intrusions into adversaries’ networks. In the end, the increasing use of ML is likely to further destabilise cyber competition.

3. Both the use of AI models and the outputs they generate can create new cybersecurity vulnerabilities.

A third theme that often appears in think tank reporting is the inherent vulnerability of AI systems and the outputs they produce.35 As Micah Musser et al. write in a CSET publication, attacks on AI systems - also known as Adversarial AI - are not new; they are part of a longstanding trend of threat actors trying to manipulate algorithmic systems, such as spam filters and recommendation engines. However, what is new is the sharp rise in the use of ML, especially deep learning (DL), in high-risk applications, making these systems more attractive and vulnerable to attack.36

Adversarial AI exploits structural weaknesses in the design, training, and operational use of AI systems. This happens in two primary ways. One is data poisoning, where attackers insert harmful or misleading data into the training set to corrupt the model's learning. Such poisoning attacks aim to bias learning outcomes, causing models to behave unpredictably or erroneously once deployed.37 The other is adversarial inputs, where attackers craft specially designed inputs that cause an already trained AI system to make incorrect decisions that serve the attacker’s goals.38 Such techniques exploit, for example, the way AI systems define objectives, or manipulate reward functions in reinforcement learning systems to produce unintended behaviours. Unlike traditional cyberattacks, which exploit coding errors, AI attacks exploit built-in weaknesses in AI algorithms that currently cannot be fully fixed.39 They are rooted in data and model behaviour, making them harder to address.40 Patching AI models often requires costly retraining, and may reduce model performance on normal inputs.41
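The first of these mechanisms, data poisoning, can be demonstrated on a toy classifier. The Python sketch below trains a one-feature nearest-centroid model twice, once on clean data and once with a few mislabelled points added, and compares accuracy on the same held-out samples. The classifier, the numbers, and the labels are all invented for illustration and do not come from the cited reports.

```python
def train(samples):
    """Fit a nearest-centroid model: the mean feature value per label."""
    groups = {}
    for x, label in samples:
        groups.setdefault(label, []).append(x)
    return {label: sum(xs) / len(xs) for label, xs in groups.items()}


def predict(model, x):
    """Assign the label whose centroid is closest to x."""
    return min(model, key=lambda label: abs(x - model[label]))


def accuracy(model, samples):
    """Share of samples the model labels correctly."""
    return sum(predict(model, x) == y for x, y in samples) / len(samples)


# Hypothetical one-feature training set (e.g. a "suspiciousness" score).
clean = [(1, "benign"), (2, "benign"), (3, "benign"),
         (7, "malicious"), (8, "malicious"), (9, "malicious")]

# Poison: high-scoring points deliberately mislabelled as benign.
poison = [(9, "benign"), (10, "benign"), (11, "benign")]

held_out = [(2, "benign"), (4, "benign"), (6, "malicious"), (9, "malicious")]

clean_model = train(clean)
poisoned_model = train(clean + poison)

print(accuracy(clean_model, held_out))     # 1.0
print(accuracy(poisoned_model, held_out))  # 0.75
```

The poisoned points drag the "benign" centroid from 2 to 6, so a borderline malicious sample is now classified as benign. Nothing in the code is buggy in the traditional sense, which is why this class of weakness cannot simply be patched.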

While adversarial AI highlights the risks of exploiting AI models directly, another growing area of concern is the security implications of AI-generated content, particularly code. As AI tools, such as GitHub Copilot, are increasingly used to write software, they introduce new vulnerabilities that attackers can exploit. Jessica Ji, Jenny Jun, Maggie Wu and Rebecca Gelles from CSET performed a comparative evaluation of several LLMs and found that only about 30% of AI-generated code snippets were secure, with memory-related bugs (e.g., buffer overflows, dereferencing errors) among the most common.42 Moreover, the report highlights that insecure AI-generated code may enter public repositories or production systems and later be used as training data, causing next-generation models to learn flawed practices and creating a feedback loop in which systemic vulnerabilities are amplified over time.43 Given the current lack of evaluation and benchmarking standards, ensuring the security of AI-generated code will likely require significant human oversight, which could limit the efficiency gains from using LLMs at scale in software development.44

Policy research treats mitigation as crucial. For example, Marcus Comiter, Micah Musser, as well as Andrew Lohn and Wyatt Hoffman, all highlight in separate publications the importance of identifying vulnerable systems early. As AI’s vulnerabilities are deeply embedded in AI’s design, systems risk manipulation at various stages of their lifecycle. They are therefore, by nature, harder to patch than traditional software bugs. Moreover, since ML models are often customized per user, vulnerabilities and fixes can be unique and harder to manage. Finally, proof-of-concept attacks on ML systems are usually less reliable and practical than traditional software exploits.45

Highlighted reading: the cybersecurity of AI systems

Attacking Artificial Intelligence: AI’s Security Vulnerability and What Policymakers Can Do About It46

Marcus Comiter (Belfer Center for Science and International Affairs)

August 2019

In this report, Marcus Comiter provides insight into the unique cybersecurity vulnerabilities inherent to AI systems, highlighting how “AI attacks” exploit fundamental algorithmic weaknesses rather than traditional coding flaws. AI attacks expand the range of possible attack methods, requiring new ways of handling data collection, storage, and use. Five key areas are most at risk: content filters, the military, law enforcement, AI-replaced human tasks, and civil society. Comiter proposes “AI Security Compliance” programs to reduce attack risks by promoting best practices like threat planning, IT reforms, and response strategies. These programs, modeled on industry standards and enforced by relevant regulators, would require mandatory compliance for government and high-risk applications, while remaining optional for lower-risk private uses to balance security and innovation.

Discussion

Think-tank analyses converge on a single conclusion: artificial intelligence is accelerating familiar cyber operations – phishing, vulnerability discovery, social engineering, malware – rather than creating novel threat classes. Yet the same acceleration also enlarges the defender’s burden. Continuous AI-driven reconnaissance means vulnerabilities must be closed almost as soon as they appear, and detection becomes harder as synthetic media and AI-generated network traffic blur behavioural signals. Defensive models themselves are brittle: distributional shift, data poisoning, and adversarial prompts can blind them unless they are constantly red-teamed and retrained.

The think-tank literature yields several notable recommendations. The first is to validate before deployment: all AI-enabled defensive tools should be treated as experimental until they clear rigorous stress testing. Red-team exercises simulating current adversary tradecraft, followed by independent audits, help reveal brittleness and prevent unproven systems from introducing new blind spots.

The second recommendation is that organisations should operate on the assumption of periodic compromise. Least-privilege architectures, real-time monitoring, automated containment, and rehearsed incident-response playbooks need to replace reliance on signature-based tools and episodic patching.

Third, think-tank reports emphasise that the most detailed evidence on AI-enabled attacks – telemetry, incident forensics, model-failure logs, and near-miss reports – sits largely in private-sector hands. Cloud providers, managed-security services, and major software firms see a scale and variety of attacks that governments and academia rarely match. Without structured access to that data, policymakers and researchers risk basing guidance on anecdotes rather than statistically robust findings. Accordingly, “expanding cross-sector information sharing” means building channels (technical, legal, and organisational) that let firms contribute relevant, sanitised data while protecting customer privacy, commercial sensitivities, and liability positions.

Notes and References

- Milmo, Dan. ‘UK Engineering Firm Arup Falls Victim to £20m Deepfake Scam’. The Guardian, 17 May 2024, sec. Technology. https://www.theguardian.com/technology/article/2024/may/17/uk-engineering-arup-deepfake-scam-hong-kong-ai-video.

- This means we excluded policy-focused pieces from industry as well as academic literature in policy studies.

- McGann, James G. ‘2020 Global Go To Think Tank Index Report’. University of Pennsylvania, 28 January 2021. https://repository.upenn.edu/entities/publication/9f1730fa-da55-40bd-a1f4-1c2b2346b753.

- Established think tanks here refers to institutions with a long-standing presence and recognized influence in the security policy space, often characterised by sizable resources.

- This comes with a caveat that the analysis is based on English-language publications produced by policy think tanks. Broader trends in other institutional or linguistic contexts may not be fully captured.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Musser, Micah, and Ashton Garriott. ‘Machine Learning and Cybersecurity: Hype and Reality’. Center for Security and Emerging Technology, June 2021. https://cset.georgetown.edu/publication/machine-learning-and-cybersecurity/.

- Musser and Garriott, for example, use the phrasing ‘incremental’. Musser and Garriott, 33.

- Multiple sources:

- Pupillo, Lorenzo, Stefano Fantin, Afonso Ferreira, and Carolina Polito. ‘Artificial Intelligence and Cybersecurity - Technology, Governance and Policy Challenges: Report of a CEPS Task Force’. Centre for European Policy Studies, 28 May 2021. https://www.ceps.eu/ceps-publications/artificial-intelligence-and-cybersecurity-2/.

- Musser, Micah, and Ashton Garriott. ‘Machine Learning and Cybersecurity: Hype and Reality’. Center for Security and Emerging Technology, June 2021. https://cset.georgetown.edu/publication/machine-learning-and-cybersecurity/.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Instead of relying only on human-coded rules, ML systems learn from data without explicit programming, and subsequently adapt over time to improve their performance. Because of these characteristics, ML is very useful to cyber adversaries in areas where there’s lots of data and a clear objective, like fuzzing but also cracking passwords. Buchanan, Ben, John Bansemer, Dakota Cary, Jack Lucas, and Micah Musser. ‘Automating Cyber Attacks: Hype and Reality’. Center for Security and Emerging Technology, November 2020. https://cset.georgetown.edu/publication/automating-cyber-attacks/.

- Multiple sources:

- Buchanan, Ben, John Bansemer, Dakota Cary, Jack Lucas, and Micah Musser. ‘Automating Cyber Attacks: Hype and Reality’. Center for Security and Emerging Technology, November 2020. https://cset.georgetown.edu/publication/automating-cyber-attacks/.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Pupillo, Lorenzo, Stefano Fantin, Afonso Ferreira, and Carolina Polito. ‘Artificial Intelligence and Cybersecurity - Technology, Governance and Policy Challenges: Report of a CEPS Task Force’. Centre for European Policy Studies, 28 May 2021. https://www.ceps.eu/ceps-publications/artificial-intelligence-and-cybersecurity-2/.

- Buchanan, Ben, John Bansemer, Dakota Cary, Jack Lucas, and Micah Musser. ‘Automating Cyber Attacks: Hype and Reality’. Center for Security and Emerging Technology, November 2020. https://cset.georgetown.edu/publication/automating-cyber-attacks/.

- Multiple sources:

- Abbas, Roba, et al. ‘Artificial Intelligence (AI) in Cybersecurity: A Socio-Technical Research Roadmap’. The Alan Turing Institute, October 2023. https://www.turing.ac.uk/news/publications/artificial-intelligence-ai-cybersecurity-socio-technical-research-roadmap.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Multiple sources:

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Hamin, Maia, and Stewart Scott. ‘Hacking with AI’. Atlantic Council, 15 February 2024, 22–23. https://www.atlanticcouncil.org/in-depth-research-reports/report/hacking-with-ai/.

- King, Meg, and Jacob Rosen. ‘The Real Challenges of Artificial Intelligence: Automating Cyber Attacks’. Wilson Center. CTRL Forward (blog), 28 November 2018. https://www.wilsoncenter.org/blog-post/the-real-challenges-artificial-intelligence-automating-cyber-attacks.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Ibid.

- Buchanan, Ben, John Bansemer, Dakota Cary, Jack Lucas, and Micah Musser. ‘Automating Cyber Attacks: Hype and Reality’. Center for Security and Emerging Technology, November 2020. https://cset.georgetown.edu/publication/automating-cyber-attacks/.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Hamin, Maia, and Stewart Scott. ‘Hacking with AI’. Atlantic Council, 15 February 2024. https://www.atlanticcouncil.org/in-depth-research-reports/report/hacking-with-ai/.

- King, Meg, and Jacob Rosen. ‘The Real Challenges of Artificial Intelligence: Automating Cyber Attacks’. Wilson Center. CTRL Forward (blog), 28 November 2018. https://www.wilsoncenter.org/blog-post/the-real-challenges-artificial-intelligence-automating-cyber-attacks.

- Buchanan, Ben, John Bansemer, Dakota Cary, Jack Lucas, and Micah Musser. ‘Automating Cyber Attacks: Hype and Reality’. Center for Security and Emerging Technology, November 2020. https://cset.georgetown.edu/publication/automating-cyber-attacks/.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Hamin, Maia, and Stewart Scott. ‘Hacking with AI’. Atlantic Council, 15 February 2024. https://www.atlanticcouncil.org/in-depth-research-reports/report/hacking-with-ai/.

- Multiple sources:

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Devanny, Joe. ‘Foreign Ministries and Cyber Power: Implications of Artificial Intelligence’. The Royal United Services Institute, 21 July 2023. https://www.rusi.org/explore-our-research/publications/commentary/foreign-ministries-and-cyber-power-implications-artificial-intelligence.

- Hoffman, Wyatt. ‘AI and the Future of Cyber Competition’. Center for Security and Emerging Technology, January 2021. https://cset.georgetown.edu/publication/ai-and-the-future-of-cyber-competition/.

- Kaushik, Anushka. ‘Leveraging Artificial Intelligence for NATO’s Cyber Resilience: Preliminary Perspectives’. GLOBSEC, 21 February 2025. https://www.globsec.org/what-we-do/publications/leveraging-artificial-intelligence-natos-cyber-resilience-preliminary.

- Tang, Saade and Kelly particularly mention an “arms race,” whereas Devanny, as well as Hoffman, point towards the existence of a “race.”

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Devanny, Joe. ‘Foreign Ministries and Cyber Power: Implications of Artificial Intelligence’. The Royal United Services Institute, 21 July 2023. https://www.rusi.org/explore-our-research/publications/commentary/foreign-ministries-and-cyber-power-implications-artificial-intelligence.

- Hoffman, Wyatt. ‘AI and the Future of Cyber Competition’. Center for Security and Emerging Technology, January 2021. https://cset.georgetown.edu/publication/ai-and-the-future-of-cyber-competition/.

- Musser, Micah, and Ashton Garriott. ‘Machine Learning and Cybersecurity: Hype and Reality’. Center for Security and Emerging Technology, June 2021. https://cset.georgetown.edu/publication/machine-learning-and-cybersecurity/.

- Multiple sources:

- Pupillo, Lorenzo, Stefano Fantin, Afonso Ferreira, and Carolina Polito. ‘Artificial Intelligence and Cybersecurity - Technology, Governance and Policy Challenges: Report of a CEPS Task Force’. Centre for European Policy Studies, 28 May 2021. https://www.ceps.eu/ceps-publications/artificial-intelligence-and-cybersecurity-2/.

- Hoffman, Wyatt. ‘Making AI Work for Cyber Defense: The Accuracy-Robustness Tradeoff’. Center for Security and Emerging Technology, December 2021. https://cset.georgetown.edu/publication/making-ai-work-for-cyber-defense/.

- King, Meg, and Jacob Rosen. ‘The Real Challenges of Artificial Intelligence: Automating Cyber Attacks’. Wilson Center. CTRL Forward (blog), 28 November 2018. https://www.wilsoncenter.org/blog-post/the-real-challenges-artificial-intelligence-automating-cyber-attacks.

- Multiple sources:

- King, Meg, and Jacob Rosen. ‘The Real Challenges of Artificial Intelligence: Automating Cyber Attacks’. Wilson Center. CTRL Forward (blog), 28 November 2018. https://www.wilsoncenter.org/blog-post/the-real-challenges-artificial-intelligence-automating-cyber-attacks.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Tang, Jennifer, Tiffany Saade, and Steve Kelly. ‘The Implications of Artificial Intelligence in Cybersecurity: Shifting the Offense-Defense Balance’. Institute for Security and Technology, October 2024. https://securityandtechnology.org/virtual-library/reports/the-implications-of-artificial-intelligence-in-cybersecurity/.

- Steier, Joshua, Erik Van Hegewald, Anthony Jacques, Gavin S. Hartnett, and Lance Menthe. ‘Understanding the Limits of Artificial Intelligence for Warfighters: Volume 2, Distributional Shift in Cybersecurity Datasets’. RAND, 3 January 2024. https://www.rand.org/pubs/research_reports/RRA1722-2.html.

- Buchanan, Ben, John Bansemer, Dakota Cary, Jack Lucas, and Micah Musser. ‘Automating Cyber Attacks: Hype and Reality’. Center for Security and Emerging Technology, November 2020. https://cset.georgetown.edu/publication/automating-cyber-attacks/.

- Musser, Micah, and Ashton Garriott. ‘Machine Learning and Cybersecurity: Hype and Reality’. Center for Security and Emerging Technology, June 2021. https://cset.georgetown.edu/publication/machine-learning-and-cybersecurity/.

- Hoffman, Wyatt. ‘AI and the Future of Cyber Competition’. Center for Security and Emerging Technology, January 2021. https://cset.georgetown.edu/publication/ai-and-the-future-of-cyber-competition/.

- Multiple sources:

- Comiter, Marcus. ‘Attacking Artificial Intelligence’. Belfer Center for Science and International Affairs, August 2019. https://www.belfercenter.org/sites/default/files/pantheon_files/2019-08/AttackingAI/AttackingAI.pdf.

- Lohn, Andrew, and Wyatt Hoffman. ‘Securing AI: How Traditional Vulnerability Disclosure Must Adapt’. Center for Security and Emerging Technology, March 2022. https://cset.georgetown.edu/publication/securing-ai-how-traditional-vulnerability-disclosure-must-adapt/.

- Zheng, Christopher. ‘The Cybersecurity Vulnerabilities to Artificial Intelligence’. Council on Foreign Relations. Net Politics (blog), 28 August 2017. https://www.cfr.org/blog/cybersecurity-vulnerabilities-artificial-intelligence.

- Ji, Jessica, Jenny Jun, Maggie Wu, and Rebecca Gelles. ‘Cybersecurity Risks of AI-Generated Code’. Center for Security and Emerging Technology, November 2024. https://cset.georgetown.edu/publication/cybersecurity-risks-of-ai-generated-code/.

- Musser, Micah, Jonathan Spring, Christina Liaghati, Daniel Rohrer, Jonathan Elliott, Rumman Chowdhury, Andrew Lohn, et al. ‘Adversarial Machine Learning and Cybersecurity: Risks, Challenges, and Legal Implications’. Center for Security and Emerging Technology, April 2023. https://cset.georgetown.edu/publication/adversarial-machine-learning-and-cybersecurity/.

- Multiple sources:

- Ji, Jessica, Jenny Jun, Maggie Wu, and Rebecca Gelles. ‘Cybersecurity Risks of AI-Generated Code’. Center for Security and Emerging Technology, November 2024. https://cset.georgetown.edu/publication/cybersecurity-risks-of-ai-generated-code/.

- Wolff, Josephine. ‘How to Improve Cybersecurity for Artificial Intelligence’. The Brookings Institution, 9 June 2020. https://www.brookings.edu/articles/how-to-improve-cybersecurity-for-artificial-intelligence/.

- Comiter, Marcus. ‘Attacking Artificial Intelligence’. Belfer Center for Science and International Affairs, August 2019. https://www.belfercenter.org/sites/default/files/pantheon_files/2019-08/AttackingAI/AttackingAI.pdf.

- Hoffman, Wyatt. ‘Making AI Work for Cyber Defense: The Accuracy-Robustness Tradeoff’. Center for Security and Emerging Technology, December 2021. https://cset.georgetown.edu/publication/making-ai-work-for-cyber-defense/.

- Multiple sources:

- Lohn, Andrew, and Wyatt Hoffman. ‘Securing AI: How Traditional Vulnerability Disclosure Must Adapt’. Center for Security and Emerging Technology, March 2022. https://cset.georgetown.edu/publication/securing-ai-how-traditional-vulnerability-disclosure-must-adapt/.

- Lohn, Andrew. ‘Poison in the Well’. Center for Security and Emerging Technology, June 2021. https://cset.georgetown.edu/publication/poison-in-the-well/.

- Musser, Micah, Jonathan Spring, Christina Liaghati, Daniel Rohrer, Jonathan Elliott, Rumman Chowdhury, Andrew Lohn, et al. ‘Adversarial Machine Learning and Cybersecurity: Risks, Challenges, and Legal Implications’. Center for Security and Emerging Technology, April 2023. https://cset.georgetown.edu/publication/adversarial-machine-learning-and-cybersecurity/.

- Ji, Jessica, Jenny Jun, Maggie Wu, and Rebecca Gelles. ‘Cybersecurity Risks of AI-Generated Code’. Center for Security and Emerging Technology, November 2024. https://cset.georgetown.edu/publication/cybersecurity-risks-of-ai-generated-code/.

- Ibid.

- Hamin, Maia, Jennifer Lin, and Trey Herr. ‘AI in Cyber and Software Security: What’s Driving Opportunities and Risks?’ Atlantic Council, 19 August 2024. https://www.atlanticcouncil.org/in-depth-research-reports/issue-brief/ai-in-cyber-and-software-security-whats-driving-opportunities-and-risks/.

- Multiple sources:

- Musser, Micah, Jonathan Spring, Christina Liaghati, Daniel Rohrer, Jonathan Elliott, Rumman Chowdhury, Andrew Lohn, et al. ‘Adversarial Machine Learning and Cybersecurity: Risks, Challenges, and Legal Implications’. Center for Security and Emerging Technology, April 2023. https://cset.georgetown.edu/publication/adversarial-machine-learning-and-cybersecurity/.

- Lohn, Andrew, and Wyatt Hoffman. ‘Securing AI: How Traditional Vulnerability Disclosure Must Adapt’. Center for Security and Emerging Technology, March 2022. https://cset.georgetown.edu/publication/securing-ai-how-traditional-vulnerability-disclosure-must-adapt/.

- Comiter, Marcus. ‘Attacking Artificial Intelligence’. Belfer Center for Science and International Affairs, August 2019. https://www.belfercenter.org/sites/default/files/pantheon_files/2019-08/AttackingAI/AttackingAI.pdf.